While ChatGPT has undoubtedly reshaped the landscape of artificial intelligence, its capabilities come with a darker side. Programmers may unknowingly be swayed by its confident, persuasive output, unaware of the risks beneath its friendly exterior. From generating fabrications to perpetuating harmful prejudices, ChatGPT's dark side demands our caution.

- Moral quandaries

- Privacy concerns

- The potential for misuse

ChatGPT: A Threat

While ChatGPT represents a fascinating advance in artificial intelligence, its rapid integration raises serious concerns. Its ability to generate human-like text can be exploited for malicious purposes, such as creating false information. Moreover, overreliance on ChatGPT could hinder innovation and blur the line between truth and fabrication. Addressing these challenges requires a multi-faceted approach involving regulation, public awareness, and continued research into the implications of this powerful technology.

The Dark Side of ChatGPT: Unmasking Its Potential Dangers

ChatGPT, the powerful language model, has captured imaginations with its extraordinary abilities. Yet, beneath its veneer of innovation lies a shadow, a potential for harm that demands our critical scrutiny. Its flexibility can be weaponized to disseminate misinformation, craft harmful content, and even impersonate individuals for devious purposes.

- Additionally, its ability to learn from data raises concerns that algorithmic bias could perpetuate and intensify existing societal inequalities.

- As a result, it is essential that we develop safeguards to address these risks. This requires a holistic approach in which developers, researchers, and ethics experts work together to ensure that ChatGPT's potential benefits are realized without jeopardizing our collective well-being.

Negative Feedback: Highlighting ChatGPT's Flaws

ChatGPT, the lauded AI chatbot, has recently faced a wave of scathing reviews from users. This feedback is exposing several weaknesses in the system's capabilities. Users have expressed frustration about misleading responses, biased answers, and a lack of real-world understanding.

- Numerous users have even claimed that ChatGPT generates plagiarized content.

- This backlash has raised concerns about the reliability of large language models like ChatGPT.

Developers are now grappling with how to address these issues. Whether ChatGPT can adapt to user feedback remains to be seen.

Can ChatGPT Be Dangerous?

While ChatGPT presents exciting possibilities for innovation and efficiency, it's crucial to acknowledge its potential negative impacts. A key concern is the spread of false information. ChatGPT's ability to generate convincing text can be exploited to create and disseminate fraudulent content, eroding trust in information sources and potentially inflaming societal divisions. Furthermore, there are concerns about the effect of ChatGPT on education, as students could depend on it to produce assignments, potentially hindering their own learning. Finally, the automation of human jobs by ChatGPT-powered systems raises ethical questions about job security and the need for reskilling in a rapidly evolving technological landscape.

Delving Deeper: The Shadow Side of ChatGPT

While ChatGPT and its ilk have undeniably captured the public imagination with their astounding abilities, it's crucial to consider the potential downsides lurking beneath the surface. These powerful tools can be susceptible to biases, potentially perpetuating harmful stereotypes and generating untrustworthy information. Furthermore, over-reliance on AI-generated content raises questions about originality, plagiarism, and the erosion of human judgment. As we navigate this uncharted territory, it's imperative to approach ChatGPT with a healthy dose of caution, ensuring its development and deployment are guided by ethical considerations and a commitment to responsibility.

Edward Furlong Then & Now!

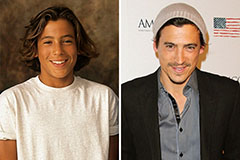

Edward Furlong Then & Now! Andrew Keegan Then & Now!

Andrew Keegan Then & Now! Keshia Knight Pulliam Then & Now!

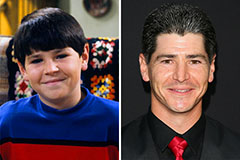

Keshia Knight Pulliam Then & Now! Michael Fishman Then & Now!

Michael Fishman Then & Now! Kerri Strug Then & Now!

Kerri Strug Then & Now!